by Dimitrios Skarlatos on May 19, 2026 | Tags: AI Agents, Hardware-Software Co-design

Architecture & Systems are Changing: The Architect’s Role in the Era of Agentic Co-Design

The AI datacenter stack is built on hardware-software contracts and abstractions that were never designed for the workloads datacenters now serve. Memory systems strain...


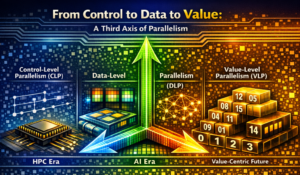

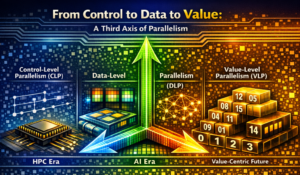

by Di Wu, Zhewen Pan, Joshua San Miguel on May 13, 2026 | Tags: AI hardware, Parallelism

TL;DR: The history of parallel computing is a history of shifting what we put at the center of the computer. The first axis, control-level parallelism (CLP), is control-centric and schedules around the program counter: it gave us the high-performance computing (HPC)...


by Helen Wright, Jeff Dean, Mark D. Hill, and Dave Patterson on May 4, 2026 | Tags: AI, Computer Systems

Editor’s Note: This post is a republication of a CRA-I post available at: https://cra.org/industry/2026/04/27/how-ai-will-reshape-computer-systems-by-2035-a-jeffersonian-dinner-in-san-francisco-about-our-10000x-future/ CRA-Industry (CRA-I) recently continued its...


by Digvijay Singh on Apr 29, 2026 | Tags: Data Prefetcher, Memory Wall

This article continues (and concludes) the discussion on the proceedings of DPC-4, covering the remaining four contestants and a summary of the trends observed in all eight prefetchers presented in the championship. Similar to Part I, we focus on how each prefetch...


by Digvijay Singh on Apr 27, 2026 | Tags: Data Prefetcher, Memory Wall

This article is the first in a two-part series that summarizes the key contributions of the 4th Data Prefetching Championship (DPC-4), held in conjunction with the 32nd iteration of HPCA in 2026. While discussing innovative data prefetching techniques presented in this...


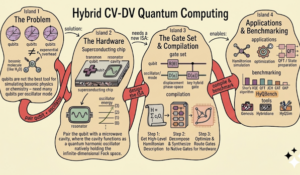

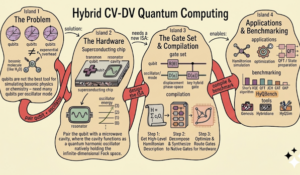

by Yuan Liu, Zihan Chen, Shubdeep Mohapatra, Jim Furches, Zheng (Eddy) Zhang, Huiyang Zhou on Apr 20, 2026 | Tags: Quantum Computing

Hybrid continuous-discrete-variable (CV-DV) quantum computing combines oscillators and qubits to tackle problems that are difficult for either model alone, from bosonic simulation to quantum error correction. At ASPLOS 2026, our tutorial introduced the foundations,...


by Karu Sankaralingam on Apr 10, 2026 | Tags: Architecture, Evaluation, Machine Learning

For decades, we have designed chips in fundamentally the same way: human intuition applied to a vanishingly small slice of an impossibly large design space. That paradigm worked when Moore’s Law was lifting everything. We could afford to be wrong. We could...


by Adnan Rakin on Apr 6, 2026 | Tags: deep neural networks, Security, side-channels

Years ago, I came across three pioneering works (CSI-NN, Cache Telepathy, and DeepSniffer) in the field of reverse engineering neural networks that inspired my journey into side-channel attacks to uncover the secrets of modern Deep Neural Networks (DNNs). Fast forward...


by Sai Srivatsa Bhamidipati on Mar 12, 2026 | Tags: Accelerators, deep neural networks, Machine Learning

The debate over sparsity versus quantization has been making the rounds in the ML optimization community for many years. Now, with the Generative AI revolution, the debate is intensifying. While these might both seem like simple mathematical approximations to an AI researcher,...


by Dmitry Ponomarev on Feb 3, 2026 | Tags: Blog, Editorial

As we close the book on 2025, Computer Architecture Today has seen another successful year of community engagement. We published 29 posts covering a wide spectrum of topics—from datacenter energy-efficiency to the evolving debate on LLMs in peer review, alongside trip...
